First, apologies for such a long gap since my last blog post. There’s a variety of reasons, including deep immersion in two Expanded Guides in quick succession (and I’ll come back to that soon, since they’ve given me a direct comparison of two major DSLR contenders). But you don’t need me bleating on about how busy I’ve been. I’ve also been trying to beef up my presence on Facebook and Twitter. But never mind that, let’s just get back to business.

One of the issues that has been very obvious working with the latest DSLRs is the tension that now exists between using the traditional viewfinder and using the LCD screen. I’ve been thinking quite a lot about this and what it may mean for the future of camera design. But let’s start at the beginning.

I cut my teeth on traditional 35mm SLRs, starting with a Zenith E that was probably assembled from spare parts lying around in a Russian tractor factory. Sorry, that’s unfair, it might look like a total clunker today but at the time it really felt like a ‘grown-up’ camera – and I still reckon that Helios lens wasn’t a bad performer. I went on through Yashicas and Contaxes before settling on Nikon 20 years ago in the shape of the classic FM2 – the apotheosis of the all-mechanical SLR. In fact I still have an FM2 at the back of the cupboard, though these days it only gets used on workshops for demonstrating a few things (it’s quite handy being able to open the back!). What all these cameras have in common is reflex viewing. And this is something they share with all the digital SLRs I’ve owned (Nikon D70, D2x, D700 and D7000) and the dozens that I’ve used.

Reflex viewing is often described as ‘through the lens’ viewing but this isn’t strictly true. On an SLR you don’t look through the lens. You actually view the image projected by the lens onto the focusing screen. You can see this screen if you take the lens off your DSLR and look up into the base of the pentaprism. The proof, if you like, that you aren’t simply looking through the lens (as if through a window) is that your eye never has to refocus on objects at different distances; the camera and lens do that instead.

Nevertheless, reflex viewing does feel very much like looking through a window, giving a sense of direct connection to the world. And this is the way that I’ve been used to working, with nearly every camera I’ve used for (OMG) almost four decades… ‘Through the lens’ (aka ‘eye-level’) viewing feels right and natural to me. And then the first digital cameras started appearing. Digital SLRs like the classic Nikon D1 (still, and possibly forever, the most significant digital camera ever made) of course relied on reflex viewing but we soon became accustomed to seeing people with digital compacts using the screen to frame their shots instead. In the early days this was making the best of a bad job; the screens were small and poor quality, but the viewfinders were usually even worse.

Now, of course, most digital compacts have much bigger and better screens and there isn’t room for a viewfinder at all. There’s a whole generation of digital camera users for whom screen viewing is entirely the norm; they don’t miss the viewfinder at all. In fact, if they trade up to a DSLR they have to get used to the completely new experience of raising the camera to their eye.

Yes, I know, most DSLRs now have Live View. But this wasn’t always true. Taking Nikon, for example (that being the range I know best), the first Nikon SLRs to have Live View were the D3 and D300, launched in August 2007 – less than four years ago. The D60 (Jan 08) and even the D3000 (Aug 09) didn’t have it; only since the arrival of the D3100 (late 2010) has it been present across the entire range. When I first saw SLRs with Live View, I didn’t get it. To my nasty suspicious mind, this feature was merely pandering to people who’d got used to screen viewing on compact cameras and couldn’t adapt to ‘proper’ cameras.

However, because I had to write about every feature of these cameras, I couldn’t just ignore Live View, and once I started using it I began to see some genuine advantages. I think it’s worth listing the main ones.

1: 100% viewing.

It’s a lamentable fact that the majority of DSLR viewfinders do not show you 100% of the image. It’s much more usual to have around 95% linear coverage, meaning you only see 90% of the picture area. This may be a hangover from film days, when commercial printing often cropped the edges of the image (and slide mounts masked it slightly), but in digital SLRs there’s no such excuse. However, equipping every camera with a 100% viewfinder would add significantly to bulk, weight and cost. I wouldn’t like to state as a fact that every camera with Live View has 100% image coverage, but every DSLR that I’ve handled does. This means that, as far as framing is concerned, Live View on most cameras delivers ‘what you see is what you get’ and the viewfinder doesn’t.
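If the jump from 95% to 90% looks odd, it’s just the linear figure squared: you lose a little off the width and a little off the height. Here’s a minimal sketch of the arithmetic in Python. The function name is my own, and the 98% figure is only an illustrative value, not a quoted spec for any particular camera.

```python
def area_coverage(linear_coverage: float) -> float:
    """Fraction of the picture area you see, given the linear coverage.

    Assumes the same fraction applies to both width and height,
    which is how viewfinder coverage is normally quoted.
    """
    return linear_coverage ** 2


# 0.95 is the typical figure mentioned above; 0.98 is illustrative only.
for linear in (0.95, 0.98, 1.00):
    print(f"{linear:.0%} linear coverage -> {area_coverage(linear):.1%} of the picture area")
```

So a ‘95%’ viewfinder hides roughly a tenth of what ends up in the final frame, which is quite enough for a stray branch or lamp-post to sneak in at the edge.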

2: Impeccable focusing.

In ‘normal’ use, i.e. using the viewfinder, DSLRs focus using a dedicated focusing sensor (usually housed in the base of the mirror box), so they’re reliant on the light relayed by the reflex mirror assembly. This is fine as long as the mirror is perfectly aligned and the image sensor is exactly where it should be, so that light from the lens has to travel exactly the same distance to the image sensor, when the mirror flips up, as it did to the focusing sensor beforehand. The trouble is, there are lots of reports of cameras with some small misalignment somewhere – just enough to throw off the accuracy of focusing by a tiny amount. It’s usually not enough to be noticeable but can become evident at those times when there’s least margin for error, i.e. using extreme long lenses or in extreme close-up work. Live View focusing, on the other hand, works directly off the main image sensor so there’s no possibility of any such mismatch. In most cases you can also position the focus point exactly where you want it, so you can focus on exactly the right part of the subject – right down to a single eyelash if you’re so inclined. Of course, when you’re talking about this level of precision, any movement in either the camera or the subject is going to wipe it out, so this mostly applies to static subjects and a camera on a tripod. (It’s also the case that Live View focusing is a lot slower, but we’re focusing on its strengths here.)

3: Depth of field preview.

Of course many SLRs have a traditional depth of field preview, whereby you press a button and the lens stops down to the actual shooting aperture. And we all know the limitations of this; it darkens the image, making it increasingly hard to assess what’s truly sharp and what isn’t quite pin-sharp.

Well, Live View, on some cameras at least, offers an alternative. You’ll have to check exactly how it applies on your DSLR. Let’s take the two cameras I’ve been working with recently. The Nikon D5100 stops down when you enter Live View, setting the lens at whatever aperture was dialled in beforehand. However, if you turn the dial to change the aperture while you’re in LV, it doesn’t update immediately, only when you take a shot.

Got that? Say you’ve set f/11 before entering LV. You look at the LV image, decide you need more depth of field and change the setting to f/22. The screen view doesn’t change right away, but when you take the shot it’s taken at f/22 and when LV resumes you’re now viewing at f/22. You’ve got to say this is a quirk in Nikon’s delivery of Live View, and that in this case Canon’s implementation is more logical, as – at least on the EOS 600D I’ve recently been using – LV updates continually to reflect changes in aperture, providing a full-time, genuinely live, depth of field preview. In fact Canon touts its ‘Final Image Simulation’ as giving you an accurate preview of brightness and colour balance too. Points to Canon on that count (for my overall verdict on the two cameras, watch this space).

OK, that’s at least three big reasons why Live View might be preferable over the viewfinder, in some circumstances anyway. But there are also some very significant downsides.

1: Handling.

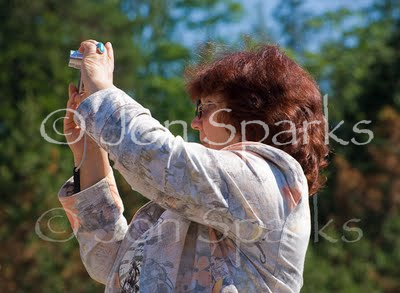

The ‘praying mantis’ posture is now characteristic of compact camera users. The problem is, holding the camera away from you is inherently less stable than holding it to your eye. This is true with lightweight compacts and even more so with heavier, bulkier SLRs. This greatly increases the incidence of camera shake. Yes, we now have the wonders of Image Stabilisation/Vibration Reduction or whatever the other makers call it, but in a lot of cases this extra tech is doing little more than cancel out the extra wobbliness due to a less stable way of holding the camera. In other words, it takes us (more or less) back to square one.

2: Speed.

On SLRs Live View is significantly slower than viewfinder shooting, for at least two main reasons. One is that focusing off the main sensor, though accurate, is also much slower than using a dedicated focusing sensor. The second is that – at best – the shutter has to close to end Live View and then open again to take the shot. This inevitably means a delay; you can hear it. If you use a continuous shooting mode, the mirror usually stays up between shots. This means that neither the screen nor the viewfinder is any use, so how do you follow a moving subject? The clear upshot of this is that Live View is seriously hamstrung when it comes to shooting moving subjects.

3: Visibility.

Screens have got better, but even the best of them are hard to see clearly in bright sunlight. You can get screen shades and even viewing loupes which at least partly alleviate this problem, but it’s extra clutter and extra faff. They’re a very good idea if you’re doing, say, sustained macro shooting on a tripod within a limited area, but less so if you’re moving around a lot and even less convenient if you’re switching regularly between Live View and viewfinder shooting.

4: Connection.

Live View gives you a different kind of view. I know that I observed early on that the viewfinder doesn’t deliver a genuine through the lens view; you are looking at the focusing screen. However, it feels direct; it feels connected to the world. By putting the camera right up to your eye it takes over your visual field and, in a strange way, almost disappears. When you use the screen, holding the camera out in front of you, it can seem more like a barrier between you and the world. To me, the Live View view is ultimately more detached and less immediate than the reflex view.

I’m aware that this last point is more subjective, but the other three are clear objective limitations to the usefulness of Live View on a digital SLR. It’s possible, in fact probable, that technology will improve matters on at least the speed and visibility counts, but there’s not going to be much that can be done about handling. The benefits of larger sensors in SLRs (and other system cameras) are undeniable, but they also mean that lenses are always going to be physically a certain size. With longer lenses in particular, handling in Live View is fundamentally unwieldy. This is not just an SLR thing. I’ve tried one of Sony’s NEX cameras (mirrorless, full-time Live View) with an 80-200mm lens and it was barely the right side of completely impractical. I am pretty certain that 200mm is about the limit for Live View handling, other than on a tripod.

So… we have three pretty big positives for Live View, and three pretty big negatives against it, plus a fourth on a more personal level. Which is why I opened this piece by referring to a tension between using the viewfinder and using the screen.

So how do we resolve this? Right now, assuming we are committed SLR users, the answer is that we use each approach when it’s most appropriate.

Viewfinder:

whenever speed is important, whether it’s a need for the focusing system to track moving subjects or just our own ability to react quickly;

whenever we’re using longer and/or heavier lenses, except on a tripod;

whenever we’re concerned about camera-shake, i.e. hand-holding at slower shutter speeds;

whenever we want that intimate sense of filling our personal field of view with the image.

Live View:

whenever we want to frame a shot carefully (probably on a tripod) using 100% of the image area (this will not apply with pro-spec DSLRs, which give 100% coverage in the viewfinder too);

whenever we want ultra-precise focusing on static subjects;

whenever we want a clear and readable depth of field preview.

This is certainly where I’m at right now. I still regard viewfinder shooting as normal but I’ve long ceased looking on Live View as a mere gimmick and I now use it routinely in situations where it shines, especially for macro photography.

But this is a compromise, isn’t it? We now have cameras with two fundamentally different, and arguably incompatible, viewing systems shoe-horned into one body. This can’t be the best of all possible worlds. What might the answer be and what are camera makers doing about it? Well, that’s what I’ll turn to in the next thrilling instalment.